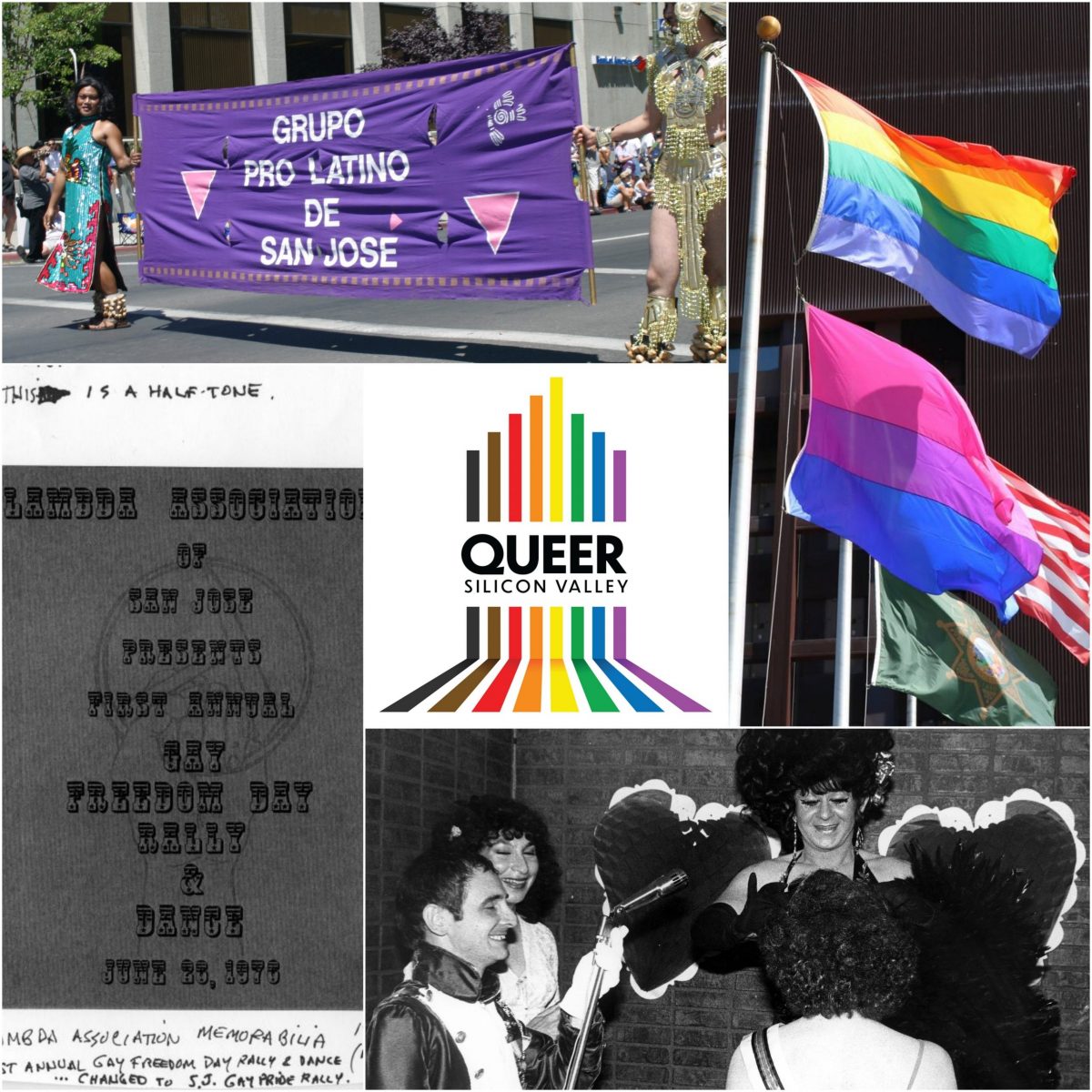

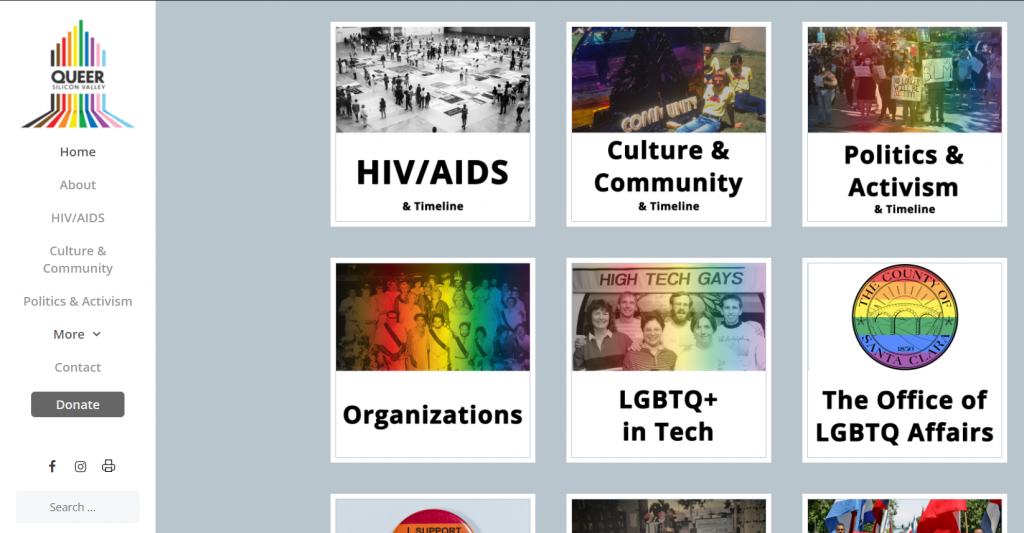

Freelance project working with a small team to organize an online history website about area queer communities over the last 50 years.

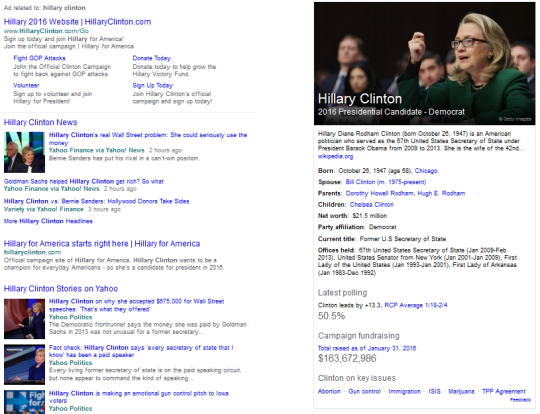

I was hired as an independent contractor to support the development and launch of a local history website. Originally intended as an in-person exhibit, QueerSiliconValley.org, a website documenting the history and culture of LGBTQ+ communities in and around Santa Clara County, California, was developed and launched in the summer and fall of 2020. Ken Yeager, BAYMEC Community Foundation’s Executive Director, spearheaded the effort and hired a small team of SJSU students to assist. Yeager’s “garage full of stuff” and a shared Google Drive were nowhere near exhibit-ready when we started. My role included project coordination, content wrangling, and helping set up the website.

Planning and communication via email alone quickly became untenable. During the early task breakdown phase, my teammates and I used a project management tool to assign and prioritize actions and research, but without the buy-in of our lead, the tool was abandoned in favor of more lightweight methods. Part of the problem was that our lead would routinely communicate different requests and concerns to individual team members. To ensure the team was sharing those updates with each other, we established a private group chat, and I maintained a list of tasks related to whatever piece(s) of the project were active at a given time, along with who was responsible for them. I would also send an email as needed with this list as a status update. Team rapport was built via chat and Zoom meetings and was critical not only to our success but also as social support during a sometimes-difficult process.

Each element of the project had its own tasking system, usually in Google Sheets, for managing notes, metadata, and status. This process became even more critical once the project hired a developer to build a custom WordPress site for us, meaning we had very specific content structure requirements to meet, as well as needing a central place to note, report, and manage bugs and requests.

Throughout the project, I advocated for language and content changes to ensure the site was as inclusive as possible, such as including a content warning before stories involving violence (approved). I also helped the team learn some of the tech tools we used by creating a WordPress tips and tricks document and personally training our lead on how to navigate the site’s admin tools. I created a style guide, worked with our website developer to take ownership of several front-end display issues and fixes, and supported outreach and marketing efforts by creating slides, a media coverage page, and a ‘social sharing’ category. I did not stick to the letter of the project brief (if such a brief ever existed) and routinely offered suggestions and found ways to make things work.

Although I have not been actively involved in the website’s management since December 2020, I know that new content being added has a clear place and format thanks to my efforts, and the team is empowered to carry out the work. Furthermore, the organizational systems I helped put in place set Ken Yeager and the History San Jose team up for success with an in-person exhibit opening mid-2021. It was by no means a perfect process (what is?), but having access to a variety of management theories and ideas meant I had ample tools to deploy as needed to keep things moving.